Why You Can Never Argue with Conspiracy Theorists | Argument Clinic | WIRED

16 Sep 2018

in economics of media and culture Tags: conspiracy theories, Karl Popper, philosophy of science

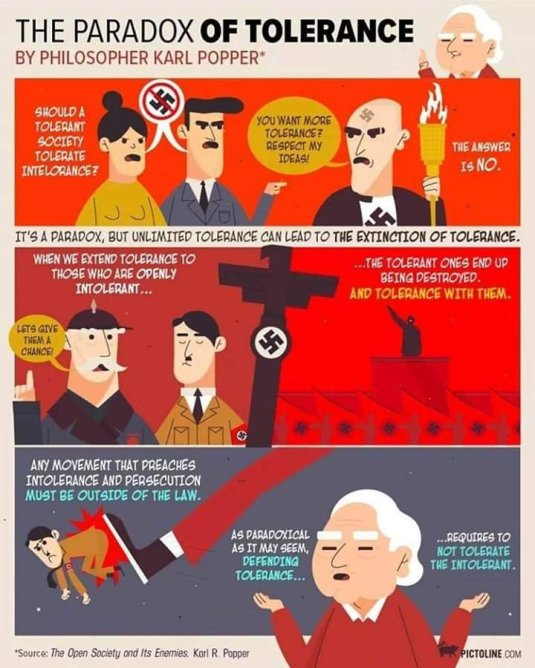

Popper said the same in The Open Society and Its Enemies

15 Aug 2016

in constitutional political economy, economics of religion, liberalism Tags: Karl Popper, political correctness

@NZGreens are so polite on Twitter @MaramaDavidson @RusselNorman @greencatherine

12 Sep 2015

in comparative institutional analysis, constitutional political economy, economics of media and culture, liberalism, managerial economics, organisational economics Tags: Cass Sunstein, Daily Me, information cocoons, infotopia, John Stuart Mill, Karl Popper, New Zealand Greens, Twitter, Twitter left

One of the first things I noticed when feuding on Twitter with Green MPs was how polite they were. Twitter is not normally known for politeness, and that is before considering the limit of 140 characters. People who are good friends and work together will go to war over email, where there is no space limit to stop them making an email polite and friendly. Imagine how easy it is to misconstrue the meaning and motives of tweets that can be only 140 characters long.

In their replies on Twitter, the New Zealand Green MPs make good points and ask penetrating questions that explain their position well and make you think more deeply about your own. Knowledge grows through critical discussion, not through consensus and agreement.

Cass Sunstein made some astute observations in Republic.com 2.0 about how the blogosphere forms into information cocoons and echo chambers. People can avoid the news and opinions they don’t want to hear.

Sunstein has argued that there are limitless news and information options and, more significantly, there are limitless options for avoiding what you do not want to hear:

- Those in search of affirmation will find it in abundance on the Internet in those newspapers, blogs, podcasts and other media that reinforce their views.

- People can filter out opposing or alternative viewpoints to create a “Daily Me.”

- The sense of personal empowerment that consumers gain from filtering out news to create their Daily Me creates an echo chamber effect and accelerates political polarisation.

A common risk of debate is group polarisation: members of a deliberating group move toward a more extreme position than their initial tendencies. How many blogs are populated by commenters who denounce anyone who disagrees? This is the role of the mindguard in groupthink.

In Infotopia, Sunstein wrote about how people use the Internet to spend too much time talking to those who agree with them and not enough time seeking out challenges:

In an age of information overload, it is easy to fall back on our own prejudices and insulate ourselves with comforting opinions that reaffirm our core beliefs. Crowds quickly become mobs.

The justification for the Iraq war, the collapse of Enron, the explosion of the space shuttle Columbia–all of these resulted from decisions made by leaders and groups trapped in “information cocoons,” shielded from information at odds with their preconceptions. How can leaders and ordinary people challenge insular decision making and gain access to the sum of human knowledge?

Conspiracy theories had enough momentum of their own before the information cocoons and echo chambers of the blogosphere gained ground.

J.S. Mill pointed out that critics who are totally wrong still add value because they keep you on your toes and sharpen both your argument and the communication of your message. If the righteous majority silences or ignores its opponents, it will never have to defend its belief and over time will forget the arguments for it.

As well as losing its grasp of the arguments for its belief, J.S. Mill adds that the majority will in due course even lose a sense of the real meaning and substance of its belief. What earlier may have been a vital belief will be reduced in time to a series of phrases retained by rote. The belief will be held as a dead dogma rather than as a living truth.

Beliefs held like this are extremely vulnerable to serious opposition when it is eventually encountered. They are more likely to collapse because their supporters do not know how to defend them or even what they really mean.

One of J.S. Mill’s scenarios involves both parties of opinion, majority and minority, each having a portion of the truth but not the whole of it. He regards this as the most common of the three scenarios, and his argument here is very simple: to enlarge its grasp of the truth, the majority must encourage the minority to express its partially true view. The three scenarios – the majority is wrong, partly wrong, or totally right – exhaust for Mill the possible permutations on the distribution of truth, and he holds that in each case the search for truth is best served by allowing free discussion.

Mill thinks history repeatedly demonstrates this process at work and offers Christianity as an illustrative example. By suppressing opposition to it over the centuries, Christians ironically weakened rather than strengthened Christian belief. Mill thinks this explains the decline of Christianity in the modern world: Christians forgot why they were Christians.

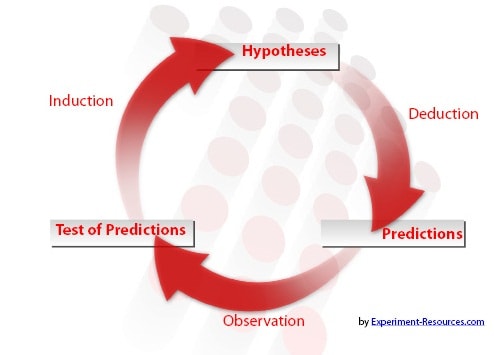

Popper on what is science

27 May 2015

in liberalism Tags: conjecture and refutation, Karl Popper

Do climate scientists understand the scientific method?

13 May 2015

in environmental economics, global warming, Karl Popper Tags: climate alarmism, conjecture and refutation, global warming, Karl Popper, philosophy of science, scientific method

Karl Popper argued that the three golden rules of science were test, test and test. A good hypothesis forbids certain things to occur, and the more it forbids, the better and more scientific the hypothesis is.

That is because if what the hypothesis forbids occurs, the hypothesis is refuted. Science is a set of testable propositions, propositions that can be refuted.

Why climate scientists say things like: "warming in the climate system is unequivocal." vox.com/cards/global-w… http://t.co/COb7v9Z5Er—

Vox Maps (@VoxMaps) May 12, 2015

Looking around for confirmation is an old trick of Marxists and astrologers. Once they had read their sacred texts, everything around them was explained, except for a few glaring anomalies that actually refuted their hypothesis:

I found that those of my friends who were admirers of Marx, Freud, and Adler, were impressed by a number of points common to these theories, and especially by their apparent explanatory power. These theories appeared to be able to explain practically everything that happened within the fields to which they referred.

The study of any of them seemed to have the effect of an intellectual conversion or revelation, opening your eyes to a new truth hidden from those not yet initiated. Once your eyes were thus opened you saw confirming instances everywhere: the world was full of verifications of the theory.

Whatever happened always confirmed it. Thus its truth appeared manifest; and unbelievers were clearly people who did not want to see the manifest truth; who refused to see it, either because it was against their class interest, or because of their repressions which were still "un-analysed" and crying aloud for treatment.

Marxists, astrologers and other pseudoscientists got around the inconvenience of repeated refutation by specifying a protective belt of auxiliary hypotheses, which grew over time to explain away the mounting anomalies in their basic hypothesis.

Too much of current public discussion of climate science is about what particular instances confirm rather than contradict. What does the global warming hypothesis strictly forbid?

Popper argued that you should look for what contradicts a theory rather than what confirms it. He developed quite simple rules early in life:

These considerations led me in the winter of 1919-20 to conclusions which I may now reformulate as follows.

(1) It is easy to obtain confirmations, or verifications, for nearly every theory – if we look for confirmations.

(2) Confirmations should count only if they are the result of risky predictions; that is to say, if, unenlightened by the theory in question, we should have expected an event which was incompatible with the theory – an event which would have refuted the theory.

(3) Every "good" scientific theory is a prohibition: it forbids certain things to happen. The more a theory forbids, the better it is.

(4) A theory which is not refutable by any conceivable event is non-scientific. Irrefutability is not a virtue of a theory (as people often think) but a vice.

(5) Every genuine test of a theory is an attempt to falsify it, or to refute it. Testability is falsifiability; but there are degrees of testability; some theories are more testable, more exposed to refutation, than others; they take, as it were, greater risks.

(6) Confirming evidence should not count except when it is the result of a genuine test of the theory; and this means that it can be presented as a serious but unsuccessful attempt to falsify the theory. (I now speak in such cases of "corroborating evidence.")

(7) Some genuinely testable theories, when found to be false, are still upheld by their admirers – for example by introducing ad hoc some auxiliary assumption, or by re-interpreting the theory ad hoc in such a way that it escapes refutation. Such a procedure is always possible, but it rescues the theory from refutation only at the price of destroying, or at least lowering, its scientific status. (I later described such a rescuing operation as a "conventionalist twist" or a "conventionalist stratagem.")

One can sum up all this by saying that the criterion of the scientific status of a theory is its falsifiability, or refutability, or testability.

When a hypothesis is tested and fails the test because something it forbade actually occurred, new insights into the underlying science are frequently gained. Clinging tenaciously to a theory in the face of refutation leads only to a sterile science. This is the fundamental difference between science and superstition: when the facts contradict you, you can learn from that refutation and grow rather than become defensive.

What academics are really saying http://t.co/e5E4H0YRqf—

Conrad Hackett (@conradhackett) February 14, 2015
